The source notifies the ETL system that data has changed, and the ETL pipeline is run to extract the changed data.

every time the ETL pipeline is run), only the new data is collected from the source, along with any data that has changed since the last collection. At each new cycle of the extraction process (e.g. Each extraction collects all data from the source and pushes it down the data pipeline.

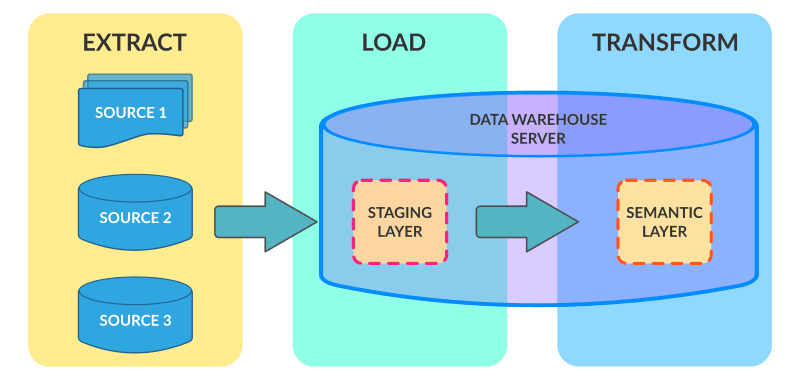

When designing the software architecture for extracting data, there are 3 possible approaches to implementing the core solution: Now let’s look at the three possible architecture designs for the extract process. But today, data extraction is mostly about obtaining information from an app’s storage via APIs or webhooks. Facebook for advertising performance, Google Analytics for website utilization, Salesforce for sales activities, etc.Įxtracted data may come in several formats, such as relational databases, XML, JSON, and others, and from a wide range of data sources, including cloud, hybrid, and on-premise environments, CRM systems, data storage platforms, analytic tools, etc. With the increase in Software as a Service (SaaS) applications, the majority of businesses now find valuable information in the apps themselves, e.g. Traditionally, extraction meant getting data from Excel files and Relational Management Database Systems, as these were the primary sources of information for businesses (e.g. This data will ultimately lead to a consolidated single data repository. The “Extract” stage of the ETL process involves collecting structured and unstructured data from its data sources.
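To make the difference between the three extraction approaches concrete (collecting everything, collecting only what changed since the last run, and reacting to a notification from the source), here is a minimal Python sketch. Everything in it is an assumption made for illustration: `Record`, `InMemorySource`, `full_extract`, and `incremental_extract` are hypothetical names, and the in-memory dictionary stands in for a real database or SaaS API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Record:
    id: int
    payload: str
    updated_at: datetime


@dataclass
class InMemorySource:
    """Hypothetical stand-in for a database table or SaaS API."""

    records: dict[int, Record] = field(default_factory=dict)
    listeners: list[Callable[[Record], None]] = field(default_factory=list)

    def upsert(self, record: Record) -> None:
        self.records[record.id] = record
        # Update notification: the source tells each subscriber what changed.
        for notify in self.listeners:
            notify(record)


def full_extract(source: InMemorySource) -> list[Record]:
    """Collect every record from the source on each run."""
    return list(source.records.values())


def incremental_extract(source: InMemorySource, since: datetime) -> list[Record]:
    """Collect only records created or changed after the last collection."""
    return [r for r in source.records.values() if r.updated_at > since]


if __name__ == "__main__":
    day1 = datetime(2024, 1, 1, tzinfo=timezone.utc)
    day2 = datetime(2024, 1, 2, tzinfo=timezone.utc)

    source = InMemorySource()
    source.upsert(Record(1, "first", day1))

    # The ETL side subscribes; from here on the source pushes changes to it.
    pushed: list[Record] = []
    source.listeners.append(pushed.append)
    source.upsert(Record(2, "second", day2))

    print("full:", [r.id for r in full_extract(source)])
    print("incremental:", [r.id for r in incremental_extract(source, since=day1)])
    print("notified:", [r.id for r in pushed])
```

In this sketch the incremental variant relies on an `updated_at` timestamp, which is only one way to detect changes. A real source may not expose such a field, in which case a full extraction or a notification mechanism such as a webhook may be the only practical option.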
